Bridging the AI-UX Divide: Responsible AI Design through Human-Centered Collaboration

Presented by Hari

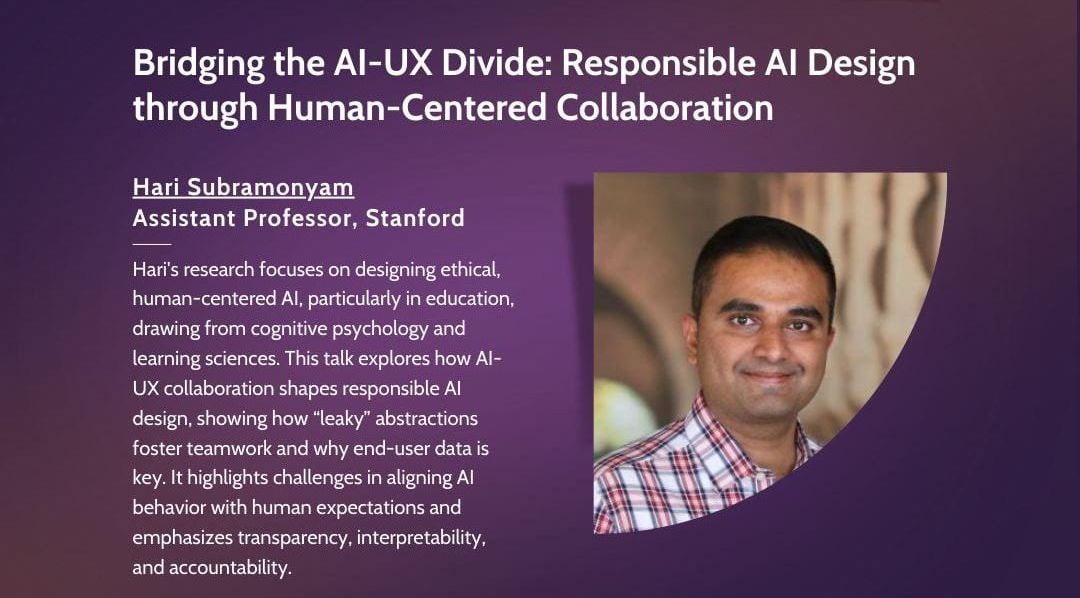

Hari’s research focuses on enabling multidisciplinary teams to design and develop ethical, responsible, and human-centered experiences with AI. He applies this work to AI in education, drawing on cognitive psychology and the learning sciences to enhance learning. He is also the Ram and Vijay Shriram Faculty Fellow at Stanford’s Institute for Human-Centered AI.

Abstract

In traditional software development, UX design and engineering are distinct: designers create specs, and engineers build them. AI blurs this line, as systems evolve dynamically with data and user interactions. In this talk, I’ll explore how collaboration at the AI-UX boundary shapes responsible AI design. Drawing from industry studies, I’ll show how “leaky” abstractions encourage cross-disciplinary teamwork and why end-user data is crucial in both AI and UX design. I’ll discuss challenges in aligning AI behavior with human expectations, emphasizing transparency, interpretability, and accountability. Finally, I’ll present insights from generative AI prototyping and share practical tools for integrating responsible AI principles into UX workflows.